
IBM speech to text tutorial

8/26/2023

These reasons, along with regulations such as the Americans with Disabilities Act and rules from the FCC, have driven the need to caption video assets. However, caption generation can be time consuming, taking 5-10 times the length of the video asset, or costly if you are paying someone else to create them.

A solution is automatic speech recognition from machine learning. This is the ability to identify words and phrases in spoken language and convert them to text. The process offers content owners a way to quickly and cost-effectively provide captions for their videos.

To address this, IBM introduced the ability to convert video speech to text through IBM Watson. This was added to IBM’s video streaming solutions in late 2017 for VODs (video on-demand). It has recently been expanded to recognize additional languages. Integrated live captioning for enterprises is also available, although it differs in several ways from the VOD feature discussed here. Additional professional services are available for captioning.

IBM Watson uses machine intelligence to transcribe speech accurately by combining information about grammar and language structure with knowledge about the composition of the audio signal. As the transcription process is underway, Watson continues to learn as more of the speech is heard, which provides additional context. Through this process, it applies this added knowledge retroactively, so if clarification of an earlier statement is introduced toward the end of the speech, Watson will go back and update the earlier part to maintain accuracy.

To convert video speech to text, content owners simply need to upload their video content to IBM’s video streaming or enterprise video streaming offerings. If the video is selected as being in a supported language, Watson will automatically start to caption the content using speech to text. This process takes roughly the length of the video to transcribe, producing quick, usable captions.

Supported languages

At launch, this feature supported 7 different languages, with English variants for either the United Kingdom or the United States. This was expanded to support 11 different languages in 2020. Right now the system can recognize the following languages:

Being a supported language means that the technology can be set to recognize audio in that language and transcribe it. So English audio would be transcribed to English text or captions, while Italian audio would be transcribed into Italian text.
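To put the captioning times quoted above in perspective, here is a minimal sketch that compares them for a given video length. It only restates the article's rough figures (5-10 times the video length for manual captioning, roughly the video length for automatic transcription); the function and constant names are made up for illustration, and real turnaround times will vary.

```python
from datetime import timedelta

# Rough multipliers taken from the article; treat them as rules of thumb.
MANUAL_FACTOR_RANGE = (5, 10)  # manual captioning: 5-10x the video length
AUTOMATIC_FACTOR = 1           # automatic captioning: roughly the video length


def estimate_captioning_time(video_length: timedelta) -> dict:
    """Return estimated captioning durations for a video of the given length."""
    low, high = MANUAL_FACTOR_RANGE
    return {
        "manual_min": video_length * low,
        "manual_max": video_length * high,
        "automatic": video_length * AUTOMATIC_FACTOR,
    }


if __name__ == "__main__":
    # Example: a 30-minute video.
    for label, duration in estimate_captioning_time(timedelta(minutes=30)).items():
        print(f"{label}: {duration}")
```

For a 30-minute video this works out to between 2.5 and 5 hours of manual effort, against about 30 minutes of automatic processing.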

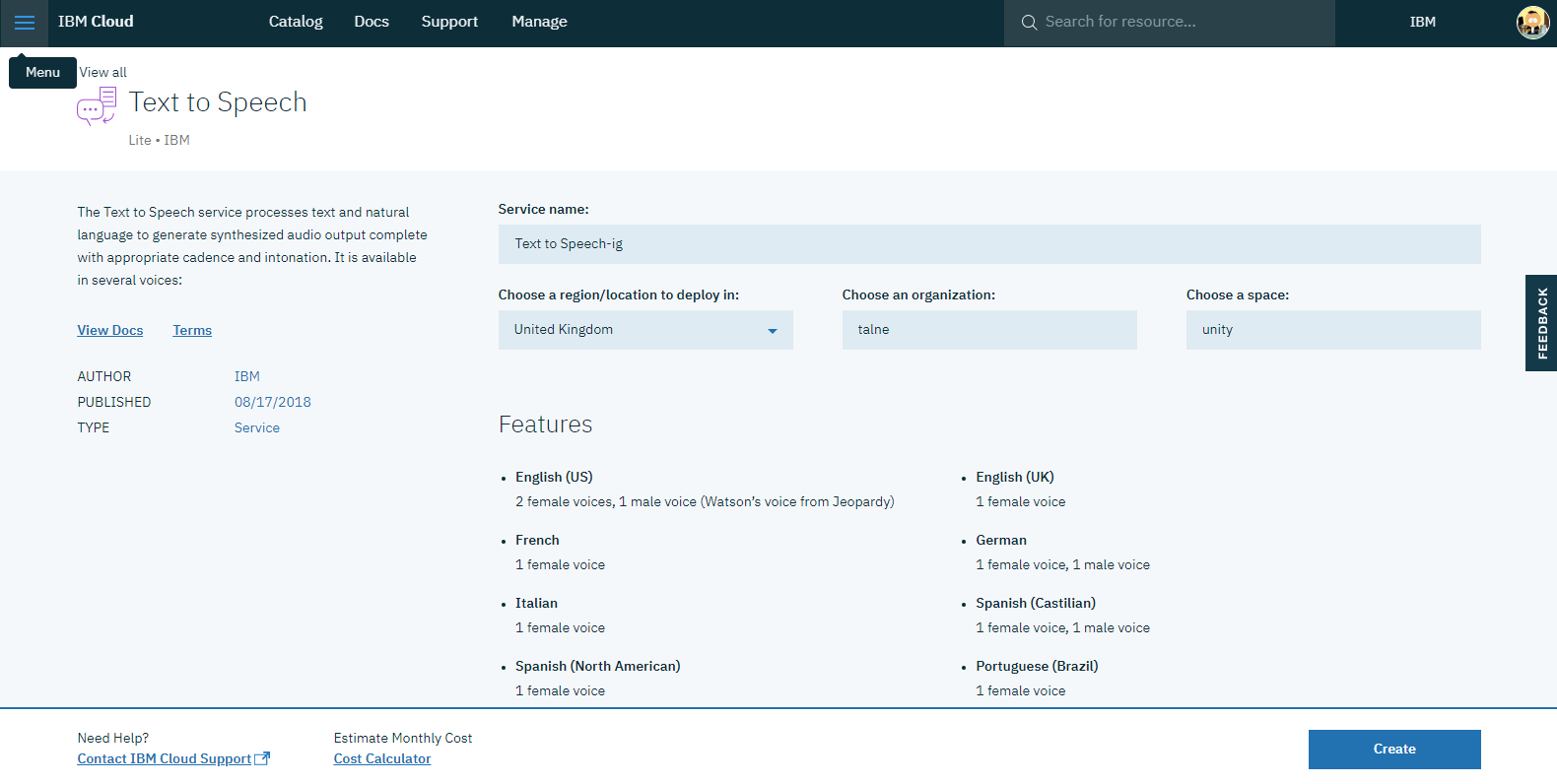

Closed captions have grown to be an important part of the video experience. While they assist deaf and hard of hearing people in enjoying video content, a study in the UK discovered that 80% of closed caption use was from those with no hearing issues. Not only that, but Facebook found that adding captions to a video increased view times on their network by 12%.

Create a chatbot: create a workspace, define an intent and entity, build the dialog flow

Allow end users to interact with the chatbot using voice and audio

To begin, you will create a Watson Assistant service on IBM® Cloud and add a workspace. A workspace is a container for the artifacts that define the conversation flow. For this tutorial, you will save the Ana_workspace.json file, which contains predefined intents, entities and dialog flow, to your machine and use it.

1. Go to the IBM Cloud Catalog and select Watson Assistant service > Lite plan under Watson. Click Create.
2. Click Service credentials on the left pane and click New credential to add a new credential.
3. Click View Credentials to see the credentials. Save the credentials in a text editor for quick reference.
4. Navigate to Manage on the left pane and click Launch tool to see the Watson Assistant dashboard.
5. Click the icon on the Ana workspace to view the details of the workspace. Copy and save the Workspace ID for future reference.

An intent represents the purpose of a user’s input, such as answering a question or processing a bill payment. You define an intent for each type of user request you want your application to support. By recognizing the intent expressed in a user’s input, the Watson Assistant service can choose the correct dialog flow for responding to it. In the tool, the name of an intent is always prefixed with the # character. Simply put, intents are the intentions of the end-user. The following are examples of intent names.

An entity represents a term or object that is relevant to your intents and that provides a specific context for an intent. You list the possible values for each entity and synonyms that users might enter. By recognizing the entities that are mentioned in the user’s input, the Watson Assistant service can choose the specific actions to take to fulfill an intent. In the tool, the name of an entity is always prefixed with the @ character.
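The intent and entity concepts described above can be illustrated with a toy sketch. Everything in it is an assumption made for illustration: the intent names, entity values and synonyms are invented, the dictionaries do not follow the schema of Ana_workspace.json, and the keyword matching is only a stand-in for the trained recognition that the Watson Assistant service performs.

```python
import re

# Hypothetical definitions, invented for this example only.
# Intent names carry the "#" prefix; each maps to example user inputs.
INTENTS = {
    "#pay_bill": ["pay my bill", "make a payment"],
    "#ask_hours": ["when are you open", "opening hours"],
}

# Entity names carry the "@" prefix; each value lists synonyms users might enter.
ENTITIES = {
    "@account": {
        "checking": ["current account"],
        "savings": ["saving account"],
    },
}


def find_entities(text):
    """Return {entity_name: value} for each entity whose value or synonym appears."""
    lowered = text.lower()
    found = {}
    for entity, values in ENTITIES.items():
        for value, synonyms in values.items():
            terms = [value, *synonyms]
            if any(re.search(rf"\b{re.escape(t)}\b", lowered) for t in terms):
                found[entity] = value
                break  # keep the first matching value per entity
    return found


def guess_intent(text):
    """Pick the intent whose example shares the most words with the input.

    Naive word overlap, used here only to make the idea concrete.
    Returns None when no example shares any word with the input.
    """
    words = set(re.findall(r"[a-z']+", text.lower()))
    best_intent, best_score = None, 0
    for intent, examples in INTENTS.items():
        for example in examples:
            score = len(words & set(example.split()))
            if score > best_score:
                best_intent, best_score = intent, score
    return best_intent


if __name__ == "__main__":
    user_input = "I want to pay my bill from savings"
    print(guess_intent(user_input), find_entities(user_input))
```

The intent selects which dialog flow to follow, while the recognized entity supplies the specific detail (here, which account) needed to carry it out.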
